Player Two Has Entered the Game: Records of Processing Activities Are No Longer Just for the EU

For years, Records of Processing Activities, or RoPAs, have been the domain of European privacy teams. Article 30 of the GDPR requires controllers and processors to maintain a detailed record of what they do with personal data. Most companies outside Europe looked on from a distance, quietly grateful it wasn't their problem. That's changing.

You're a Fintech. You Probably Handle Financial Data. That Could Make You a Financial Institution.

There's a line I hear fairly often when I'm talking to founders and CTOs at fintech companies: "We don't actually handle the money, so the financial regulations don't really apply to us." I get it, and I'll admit the definition is one I wrestle with myself. If you're building the software layer that sits on top of a payment processor, or you're aggregating transaction data to surface insights, it feels like you're one step removed from the regulatory picture. But… you're not.

"We Just Build the Software" Isn't a Privacy Strategy

There's an assumption that runs deep in the outsourced development world: privacy is the client's problem. You're not collecting the data, you're not running the business, so the obligations must sit with them. Right?

Not quite.

Three Things on Your Product Roadmap That Should Raise a Privacy Flag

If you're managing a product roadmap, you're used to balancing speed, scope, and stakeholder expectations. Privacy, I find, tends to get slotted in somewhere near the bottom of that list, or handed off entirely to legal with a "they'll catch it." The problem is that by the time legal catches it, you're already mid-sprint, and the fix is significantly more expensive than it would have been at the planning stage.

For the Love of All Things Private, Don't Copy Your Privacy Notice

There's one thing I see regularly when working with early-stage and growth-stage software companies, and it makes me wince every single time: a copy-pasted privacy notice.

I get it. Privacy notices feel like a legal formality. You need one, you don't want to spend time or money on it, and there's a competitor or a tool you like whose notice looks pretty reasonable. So you borrow it, maybe swap out a few company names, and move on. Done.

Using AI to Write Your Privacy Policies? Here's What to Watch Out For

AI is genuinely good at writing policies. I'll say that up front because it's true, and because the rest of this article is a bit of a cautionary tale. I don't want you walking away thinking the answer is to abandon the tool entirely.

The answer is not to abandon it. The answer is to use it properly.

When Someone Asks for Their Data Back: DSRs and Why B2B Companies Can't Ignore Them

Most software companies I work with don't think data subject access requests are their problem. They're not selling to consumers, they're not collecting marketing lists, and they're certainly not the type of company that ends up in the news for a privacy breach. And then one day, an email lands in their support inbox from someone they've never heard of, asking for all the personal information the company holds about them.

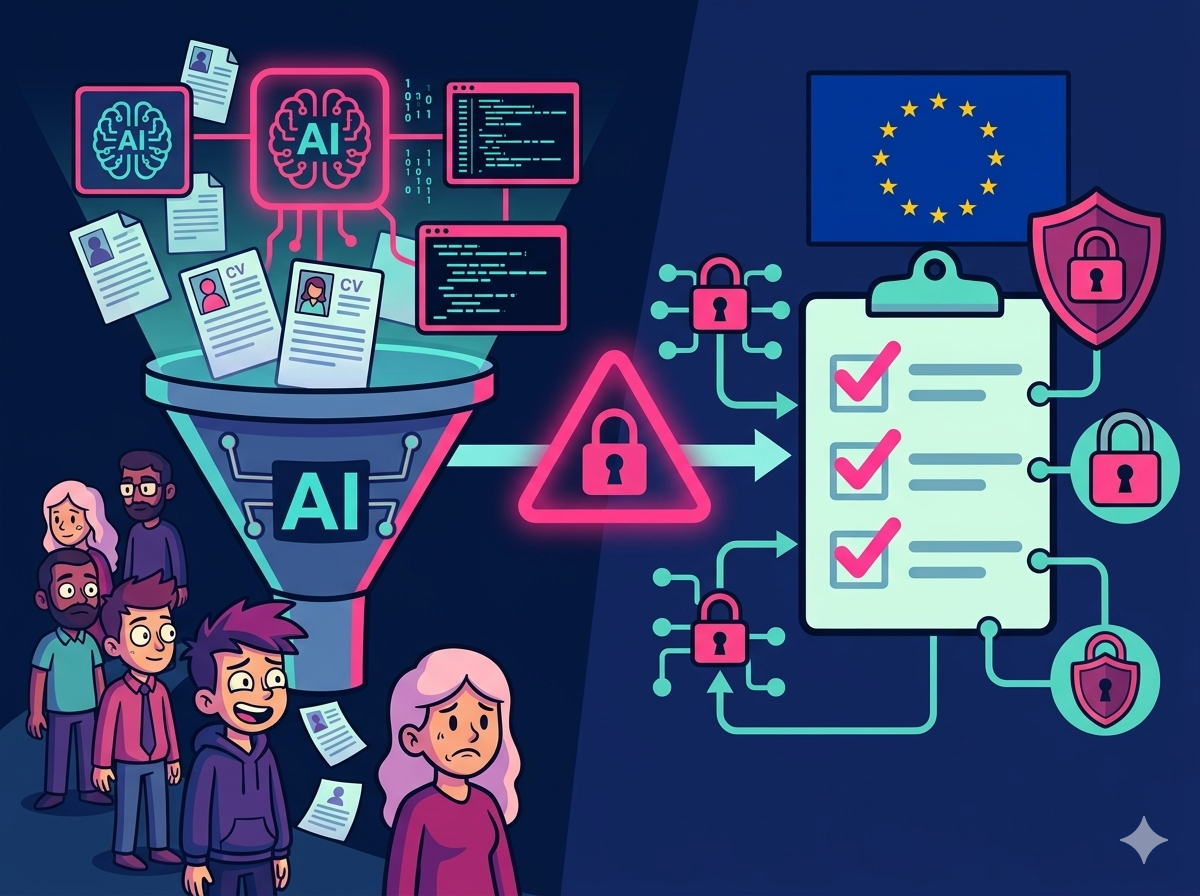

The EU AI Act Is Already in Your Hiring Pipeline

Here's a question worth sitting with for a moment: is your technical recruitment team using any AI tools right now? An applicant tracking system that filters CVs? A platform that scores candidates? A note-taking bot that summarises interviews? If the answer to any of those is yes, and you have candidates or employees in the EU, you are already operating in territory the EU AI Act cares about. The Act lists AI systems used to filter job applications and evaluate candidates among its high-risk use cases.

You Don't Need a Perfect Privacy Program. You Need to Start One.

There's a conversation I find myself having surprisingly often with founders and CTOs at growing tech companies. It goes something like this: "We know we probably need to look at privacy, but we're not processing that much personal data yet," or "We're not ready to take on something that big." And so the program gets shelved. Indefinitely.

The irony is that waiting until you feel "ready" is one of the riskier positions you can take.

Your Entire Team Doesn't Need Prod Access (And Your Privacy Officer Will Thank You)

Let me paint you a picture that I see far too often in growing tech companies. You've got multiple products, multiple development teams, and they all have access to production data. DevOps has keys to everything. Support can see client information whenever they need it. Dev teams can pull production databases for debugging. Everyone's happy because they can move fast and fix things quickly.

Your privacy officer, however, is having a quiet panic attack in the corner.

Your Email Inbox Is a Privacy Time Bomb (And How to Defuse It)

One of the issues I see repeatedly when working with development teams and their CTOs is the way companies treat email. Many organizations configure email as a permanent archive, keeping messages for the duration of someone's employment and indefinitely afterward, "just in case we need it later."

This approach seems prudent on the surface, but from a privacy perspective, it's a ticking time bomb.

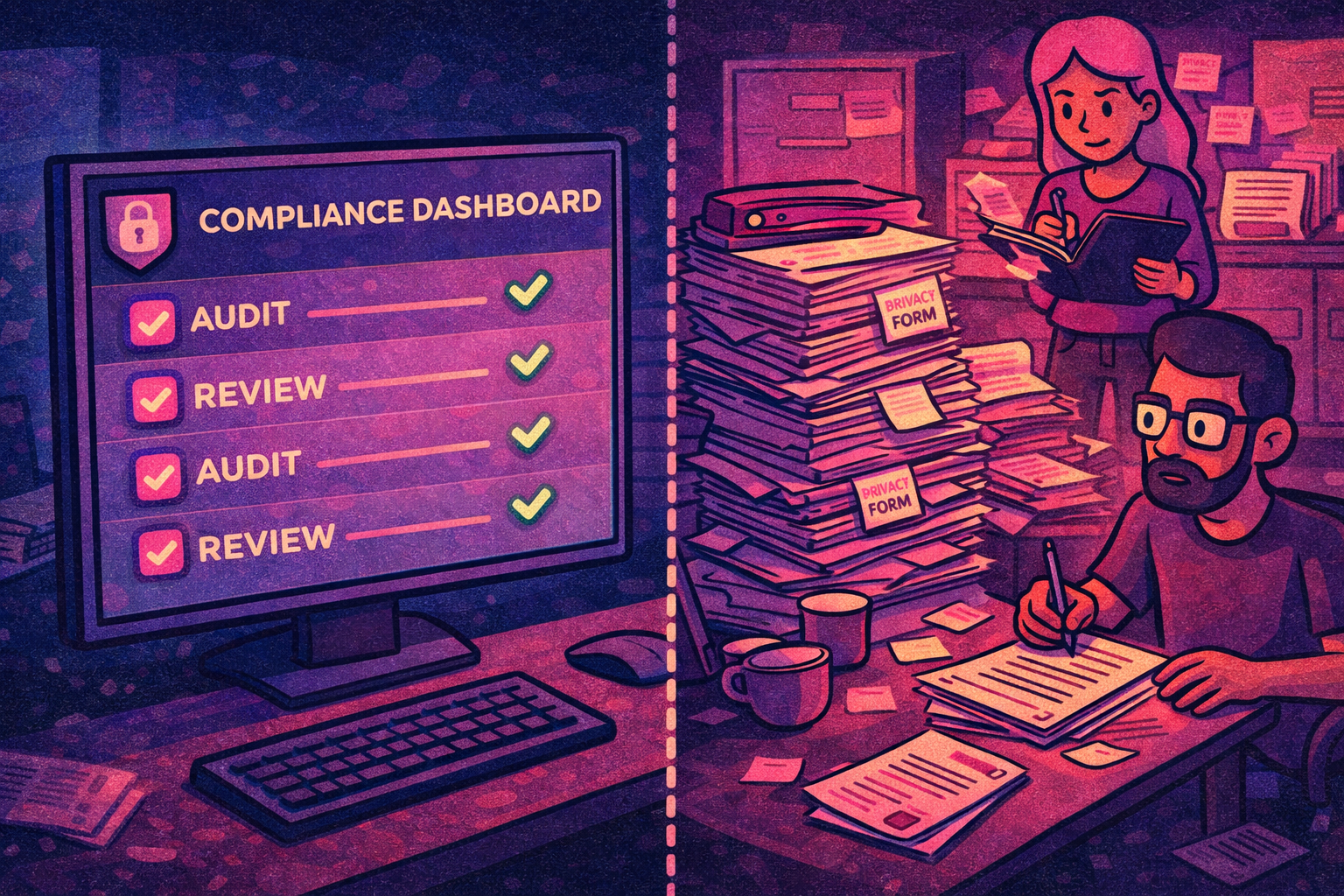

Evidence Tools Aren't a Silver Bullet for Privacy Compliance

Companies are increasingly relying on security evidence collection platforms for their SOC 2, ISO 27001, or GDPR compliance programs, assuming that if the tool says they're compliant, they must be covered. The reality is quite different, particularly for privacy.

These platforms are excellent at what they do: collecting digital evidence, tracking policies, monitoring systems, and creating audit trails. They'll plug into your cloud infrastructure, pull logs from your applications, and generate reports that auditors love to see. But here's the catch: they only see what's digital.